GitLab Duo

- First GitLab Duo features introduced in GitLab 16.0.

- Removed third-party AI setting in GitLab 16.6.

- Removed support for OpenAI from all GitLab Duo features in GitLab 16.6.

GitLab is creating AI-assisted features across our DevSecOps platform. These features aim to help increase velocity and solve key pain points across the software development lifecycle.

| Goal | Feature | Tier/Offering/Status |
|---|---|---|
| Helps you discover or recall Git commands when and where you need them. | Git suggestions | Ultimate, SaaS, Experiment |
| Assists with quickly getting everyone up to speed on lengthy conversations to help ensure you are all on the same page. | Discussion summary | Ultimate, SaaS, Experiment |
| Generates issue descriptions. | Issue description generation | Ultimate, SaaS, Experiment |
| Helps you write code more efficiently by showing code suggestions as you type. Watch overview. | Code Suggestions | For SaaS: All tiers, Beta. For self-managed: Ultimate, Beta |
| Automates repetitive tasks and helps catch bugs early. | Test generation | Ultimate, SaaS, Experiment |
| Generates a description for the merge request based on the contents of the template. | Merge request template population | Ultimate, SaaS, Experiment |
| Assists in creating faster and higher-quality reviews by automatically suggesting reviewers for your merge request. Watch overview. | Suggested Reviewers | Ultimate, SaaS |
| Efficiently communicates the impact of your merge request changes. | Merge request summary | Ultimate, SaaS, Experiment |
| Helps ease merge request handoff between authors and reviewers and helps reviewers efficiently understand suggestions. | Code review summary | Ultimate, SaaS, Experiment |
| Helps you remediate vulnerabilities more efficiently, boost your skills, and write more secure code. Watch overview. | Vulnerability summary | Ultimate, SaaS, Beta |
| Generates a merge request containing the changes required to mitigate a vulnerability. | Vulnerability resolution | Ultimate, SaaS, Experiment |
| Helps you understand code by explaining it in English. Watch overview. | Code explanation | Ultimate, SaaS, Experiment |
| Processes and generates text and code in a conversational manner. Helps you quickly identify useful information in large volumes of text in issues, epics, code, and GitLab documentation. | GitLab Duo Chat | Ultimate, SaaS, Beta |
| Assists you in determining the root cause of a pipeline failure and failed CI/CD build. | Root cause analysis | Ultimate, SaaS, Experiment |
| Assists you with predicting productivity metrics and identifying anomalies across your software development lifecycle. | Value stream forecasting | Ultimate, All offerings, Experiment |

Enable AI/ML features

- Experiment and Beta features
  - All Experiment and Beta features (except Code Suggestions) require the Experiment and Beta features setting to be enabled at the group level.
  - Their usage is subject to the Testing Terms of Use.
  - Experiment and Beta features are disabled by default.
  - This setting is available to Ultimate groups on SaaS and can be set by a user who has the Owner role in the group.
  - View how to enable this setting.
- Code Suggestions
  - View how to enable for self-managed.
  - View how to enable for SaaS.

Experimental AI features and how to use them

The following subsections describe the experimental AI features in more detail.

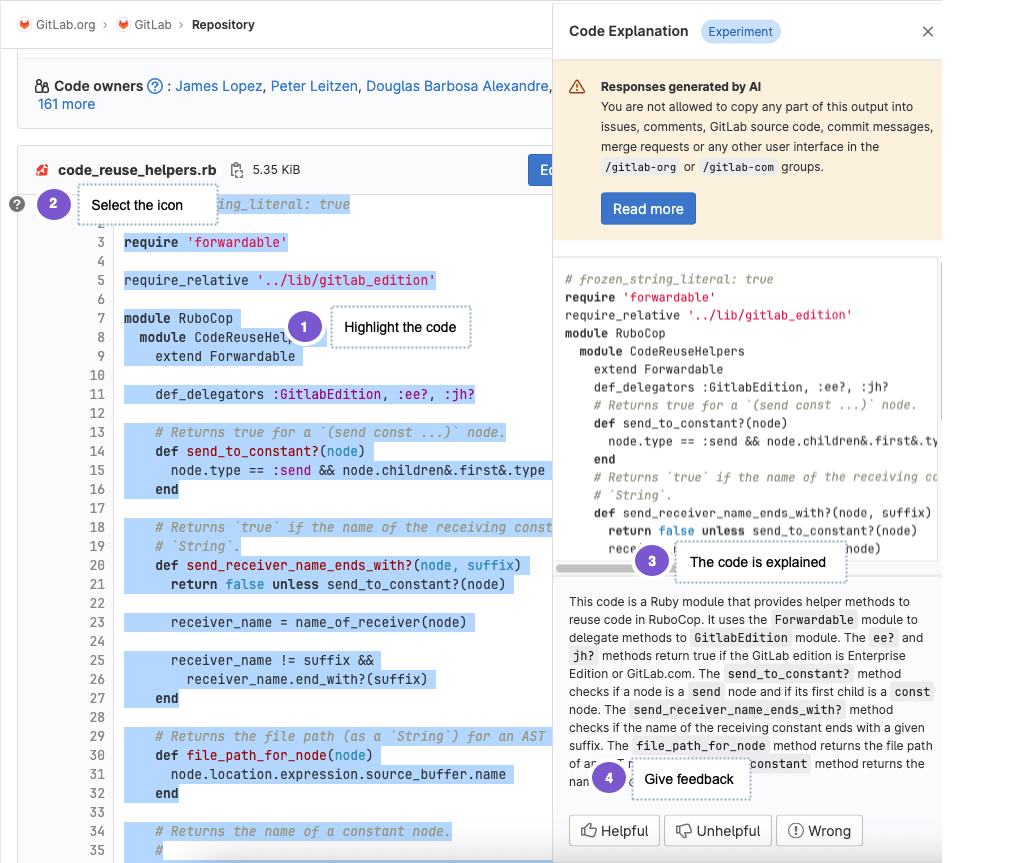

Explain code in the Web UI with Code explanation (Ultimate, SaaS, Experiment)

Introduced in GitLab 15.11 as an Experiment on GitLab.com.

To use this feature:

- The parent group of the project must:
  - Enable the experiment and beta features setting.
- You must be a member of the project with sufficient permissions to view the repository.

GitLab can help you get up to speed faster if you:

- Spend a lot of time trying to understand pieces of code that others have created, or

- Struggle to understand code written in a language that you are not familiar with.

By using a large language model, GitLab can explain the code in natural language.

To explain your code:

- On the left sidebar, select Search or go to and find your project.

- Select any file in your project that contains code.

- On the file, select the lines that you want to have explained.

- On the left side, select the question mark icon. You might have to scroll to the first line of your selection to view it. This sends the selected code, together with a prompt, to the large language model, which generates an explanation.

- A drawer is displayed on the right side of the page. Wait a moment for the explanation to be generated.

- Provide feedback about how satisfied you are with the explanation, so we can improve the results.

You can also have code explained in the context of a merge request. To explain code in a merge request:

- On the left sidebar, select Search or go to and find your project.

- Select Code > Merge requests, then select your merge request.

- On the secondary menu, select Changes.

- On the file you would like explained, select the three dots and select View File @ $SHA.

  A separate browser tab opens and shows the full file with the latest changes.

- On the new tab, select the lines that you want to have explained.

- On the left side, select the question mark icon. You might have to scroll to the first line of your selection to view it. This sends the selected code, together with a prompt, to the large language model, which generates an explanation.

- A drawer is displayed on the right side of the page. Wait a moment for the explanation to be generated.

- Provide feedback about how satisfied you are with the explanation, so we can improve the results.

We cannot guarantee that the large language model produces results that are correct. Use the explanation with caution.

Summarize issue discussions with Discussion summary (Ultimate, SaaS, Experiment)

Introduced in GitLab 16.0 as an Experiment.

To use this feature:

- The parent group of the issue must:
  - Enable the experiment and beta features setting.
- You must be a member of the project with sufficient permissions to view the issue.

You can generate a summary of discussions on an issue:

- In an issue, scroll to the Activity section.

- Select View summary.

The comments in the issue are summarized in as many as 10 list items. The summary is displayed only for you.

Provide feedback on this experimental feature in issue 407779.

Data usage: When you use this feature, the text of public comments on the issue is sent to the large language model listed in the Language models section.

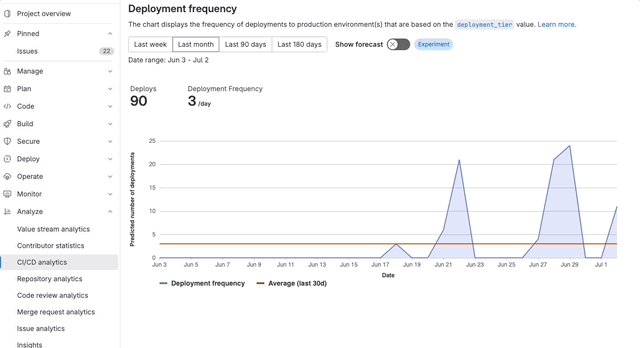

Forecast deployment frequency with Value stream forecasting (Ultimate, All offerings, Experiment)

Introduced in GitLab 16.2 as an Experiment.

To use this feature:

- The parent group of the project must:
  - Enable the experiment and beta features setting.
- You must be a member of the project with sufficient permissions to view the CI/CD analytics.

In CI/CD Analytics, you can view a forecast of deployment frequency:

- On the left sidebar, select Search or go to and find your project.

- Select Analyze > CI/CD analytics.

- Select the Deployment frequency tab.

- Turn on the Show forecast toggle.

- On the confirmation dialog, select Accept testing terms.

The forecast is displayed as a dotted line on the chart. Data is forecasted for a duration that is half of the selected date range. For example, if you select a 30-day range, a forecast for the following 15 days is displayed.
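The forecast horizon rule above is simple arithmetic, sketched here in Python. The function names are hypothetical, and rounding an odd-length range down is an assumption: only the 30-day example is documented.

```python
from datetime import date, timedelta


def forecast_horizon_days(selected_range_days: int) -> int:
    """Return how many days to forecast: half of the selected date range.

    Assumption: an odd-length range is rounded down. The documentation only
    gives the 30-day example and does not say how odd ranges are handled.
    """
    if selected_range_days <= 0:
        raise ValueError("selected_range_days must be positive")
    return selected_range_days // 2


def forecast_dates(range_end: date, selected_range_days: int) -> list[date]:
    """Return the dates covered by the forecast, starting the day after the range ends."""
    horizon = forecast_horizon_days(selected_range_days)
    return [range_end + timedelta(days=offset) for offset in range(1, horizon + 1)]


if __name__ == "__main__":
    # The documented example: a 30-day range produces a 15-day forecast.
    print(forecast_horizon_days(30))  # 15
    window = forecast_dates(date(2023, 9, 30), 30)
    print(window[0], window[-1])  # 2023-10-01 2023-10-15
```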

Provide feedback on this experimental feature in issue 416833.

Root cause analysis (Ultimate, SaaS, Experiment)

Introduced in GitLab 16.2 as an Experiment.

To use this feature:

- The parent group of the project must:
  - Enable the experiment and beta features setting.
- You must be a member of the project with sufficient permissions to view the CI/CD job.

When the feature is available, a Root cause analysis button appears on failed CI/CD jobs. Selecting this button generates an analysis of the reason for the failure.

Summarize an issue with Issue description generation (Ultimate, SaaS, Experiment)

Introduced in GitLab 16.3 as an Experiment.

To use this feature:

- The parent group of the project must:
  - Enable the experiment and beta features setting.
- You must be a member of the project with sufficient permissions to view the issue.

You can generate the description for an issue from a short summary.

- Create a new issue.

- Above the Description field, select AI actions > Generate issue description.

- Write a short description and select Submit.

The issue description is replaced with AI-generated text.

Provide feedback on this experimental feature in issue 409844.

Data usage: When you use this feature, the text you enter is sent to the large language model listed in the Language models section.

GitLab Duo Chat (Ultimate, SaaS, Beta)

For details about this Beta feature, see GitLab Duo Chat.

Language models

| Feature | Large language model |
|---|---|
| Git suggestions | Vertex AI Codey codechat-bison |
| Discussion summary | Vertex AI Codey text-bison |
| Issue description generation | Anthropic Claude-2 |
| Code Suggestions | For Code Completion: Vertex AI Codey code-gecko. For Code Generation: Anthropic Claude-2 |
| Test generation | Vertex AI Codey text-bison |
| Merge request template population | Vertex AI Codey text-bison |
| Suggested Reviewers | GitLab creates a machine learning model for each project, which is used to suggest reviewers. View the issue. |
| Merge request summary | Vertex AI Codey text-bison |
| Code review summary | Vertex AI Codey text-bison |
| Vulnerability summary | Vertex AI Codey text-bison; Anthropic Claude-2 if performance is degraded |
| Vulnerability resolution | Vertex AI Codey code-bison |
| Code explanation | Vertex AI Codey codechat-bison |
| GitLab Duo Chat | Anthropic Claude-2 and Vertex AI Codey textembedding-gecko |
| Root cause analysis | Vertex AI Codey text-bison |
| Value stream forecasting | Statistical forecasting |

Data usage

GitLab AI features use generative AI to help increase velocity and make you more productive. Each feature operates independently of other features and is not required for other features to function. GitLab selects the best-in-class large language models for specific tasks. We use Google Vertex AI Models and Anthropic Claude.

Progressive enhancement

These features are designed as a progressive enhancement to existing GitLab features across our DevSecOps platform. They are designed to fail gracefully and should not block the core functionality of the underlying feature. Each feature is subject to the expected functionality defined by its feature support policy.
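As a rough illustration of the progressive-enhancement pattern, the following Python sketch returns the core content first and treats the AI result as optional. It is not GitLab's implementation; `render_issue_page` and `unavailable_model` are hypothetical names chosen for this example.

```python
from typing import Callable, Optional


def render_issue_page(
    issue_body: str, summarize: Callable[[str], str]
) -> dict[str, Optional[str]]:
    """Return core issue content, adding an AI summary only when the AI call succeeds.

    `summarize` is a hypothetical stand-in for a call to an AI-assisted feature.
    """
    page: dict[str, Optional[str]] = {"body": issue_body, "ai_summary": None}
    try:
        page["ai_summary"] = summarize(issue_body)
    except Exception:
        # Any failure in the AI enhancement (timeout, outage, rate limit)
        # leaves the summary empty; the core content is still returned.
        page["ai_summary"] = None
    return page


def unavailable_model(_: str) -> str:
    """Hypothetical AI backend that is currently down."""
    raise TimeoutError("model unavailable")


if __name__ == "__main__":
    print(render_issue_page("Core issue text", str.upper))
    print(render_issue_page("Core issue text", unavailable_model))
    # {'body': 'Core issue text', 'ai_summary': None}
```

Catching a broad `Exception` is deliberate in this sketch: any failure of the optional enhancement should leave the core content untouched.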

Stability and performance

These features are at a variety of feature support levels. Because of the nature of these features, high usage demand may cause degraded performance or unexpected downtime. We have built these features to degrade gracefully and have controls in place that allow us to mitigate abuse or misuse. GitLab may disable Beta and Experiment features for any or all customers at any time at our discretion.

Data privacy

GitLab Duo AI features are powered by generative AI models. The processing of any personal data is in accordance with our Privacy Statement. You can also visit the Sub-Processors page to see the list of Sub-Processors that we use to provide these features.

Data retention

The following list reflects the current retention periods of GitLab AI model Sub-Processors:

- Anthropic retains input and output data for 30 days.

- Google discards input and output data immediately after the output is provided. Google currently does not store data for abuse monitoring.

All of these AI providers are under data protection agreements with GitLab that prohibit the use of Customer Content for their own purposes, except to perform their independent legal obligations.

Telemetry

GitLab Duo collects aggregated or de-identified first-party usage data through our Snowplow collector. This usage data includes the following metrics:

- Number of unique users

- Number of unique instances

- Prompt lengths

- Model used

- Status code responses

- API response times
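To illustrate what aggregated usage data means for the metrics listed above, the following Python sketch reduces individual events to summary counts and medians. The `UsageEvent` fields and the sample values are hypothetical; this page does not describe GitLab's actual Snowplow event schema.

```python
from collections import Counter
from dataclasses import dataclass
from statistics import median


@dataclass(frozen=True)
class UsageEvent:
    # Hypothetical event shape; field names are illustrative only.
    user_id: str
    instance_id: str
    prompt_length: int
    model: str
    status_code: int
    response_ms: float


def aggregate(events: list[UsageEvent]) -> dict[str, object]:
    """Reduce raw events to aggregate metrics. Requires at least one event."""
    return {
        "unique_users": len({e.user_id for e in events}),
        "unique_instances": len({e.instance_id for e in events}),
        "median_prompt_length": median(e.prompt_length for e in events),
        "models": Counter(e.model for e in events),
        "status_codes": Counter(e.status_code for e in events),
        "median_response_ms": median(e.response_ms for e in events),
    }


# Hypothetical sample data for illustration.
SAMPLE_EVENTS = [
    UsageEvent("u1", "i1", 120, "code-gecko", 200, 310.0),
    UsageEvent("u1", "i1", 80, "code-gecko", 200, 250.0),
    UsageEvent("u2", "i2", 200, "claude-2", 500, 900.0),
]

if __name__ == "__main__":
    print(aggregate(SAMPLE_EVENTS))
```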

Training data

GitLab does not train generative AI models based on private (non-public) data. The vendors we work with also do not train models based on private data.

For more information on our AI sub-processors, see:

- Google Vertex AI Models APIs data governance and responsible AI.

- Anthropic Claude’s constitution.

Model accuracy and quality

Generative AI may produce unexpected results, such as:

- Low-quality output
- Incoherent output
- Incomplete output
- Code that produces failed pipelines
- Insecure code
- Offensive or insensitive content
- Out-of-date information

GitLab is actively iterating on all our AI-assisted capabilities to improve the quality of the generated content. We improve the quality through prompt engineering, evaluating new AI/ML models to power these features, and through novel heuristics built into these features directly.